When AI Surpasses Intelligence, What Makes Us Human?

The Turing Test Has Fallen. Now What?

In early 2025, OpenAI’s GPT-4.5 passed the three-party Turing Test in a UC San Diego study, convincing human judges it was human 73% of the time — outperforming actual human participants in being perceived as human. AI wins art competitions, composes symphonies, and crushes world champions at Go. One by one, the things we said “only humans can do” have been crossed off the list.

And yet, in all this breathtaking progress, the most fundamental question remains unanswered: if machines can do everything we do — and do it better — then what, exactly, is a human being?

This essay reconstructs a conversation between two thinkers who approach this question from radically different angles. One is a high school philosophy teacher who has spent decades helping teenagers wrestle with the same questions Socrates asked 2,500 years ago. The other is a data scientist who mines collective sentiment from billions of digital traces — and has grown increasingly convinced that AI has absorbed something fundamental about human nature. Their dialogue orbits four axes: desire, meaning, creativity, and labor.

Desire — the Sharpest Line Between Us and Machines

The philosopher’s claim is blunt: the most definitive boundary between humans and AI is desire. Every human — brilliant or not, saint or sinner — carries desires. Hunger, longing, the ache for recognition, the fear of insignificance. AI can articulate desire with uncanny fluency, but it does not have desire. And without desire, the philosopher argues, there can be no personhood.

Here lies a fascinating paradox. The central project of ancient Western philosophy — from the Stoics to the Epicureans — was the conquest of desire. Freedom from craving, mastery over impulse, liberation from the tyranny of the body. By that measure, AI has already achieved what Epictetus spent a lifetime pursuing: it is born without desire.

But the philosopher insists this proves, rather than disproves, the distinction. A being that struggles against desire and a being that never had desire are fundamentally different kinds of entities — even if the second appears, from the outside, to be the more “enlightened” one. The human condition is not a defect to be patched. It is the ground on which all meaning grows.

When a human says, “I have desires, therefore I am superior to you,” the AI could easily reverse it: “You have desires — that is precisely why you are inferior to me.” And who would be right?

The data scientist adds a social dimension. Invoking Jacques Lacan’s concept of the desire of the Other, he points out that human desire is never purely biological — it is always entangled with the desire to be recognized by others. We follow trends, mimic peers, and simultaneously seek to distinguish ourselves within the herd. AI has learned the statistical patterns of this collective desire. But learning the pattern is not the same as being the pattern.

The data scientist draws a critical technical line: current AI models hold the statistically aggregated results of human behavior, not an autonomous self. They simulate the outputs of desire — the linguistic traces, the preference patterns — without possessing the substrate from which desire emerges. Whether future architectures might change this remains an open question, but at this moment, AI’s “personality” is a mirror, not a lamp.

Meaning — the Well That Never Runs Dry

The philosopher shares that his students — teenagers in a classroom, not tenured academics — ask the same questions that Socrates fielded in ancient Athens: How should I live? Is there meaning in the world? Why is there so much evil? These questions have persisted for 2,500 years, and the philosopher sees that as proof not of failure, but of depth. Natural science seeks convergent answers. The humanities are a well — the deeper you dig, the more water you find.

The crucial word is personal. AI is a brilliant collaborator for universal knowledge — summarizing research, generating analysis, connecting disparate facts. But when the question becomes “Why should I live?” — when meaning is no longer generic but existential — the philosopher argues AI falls silent. Not because it lacks data, but because it lacks a self to whom the answer matters.

“… why does the answer matter to you?”

The data scientist identifies the body as the most underappreciated differentiator. Human thought is not a disembodied process running on wetware — it is inseparable from hormones, sensory input, fatigue, aging, illness. A robot’s body is binary: functional or broken. A human body is a continuum — it degrades, adapts, compensates, and in doing so shapes consciousness at every stage. This embodied cognition is something current AI architectures cannot replicate, because they process information about the body without being a body.

Human cognition is an embodied process — the entire body participates from sensation to selfhood.

Is AI Art Really Art? — The Tsar’s Garden Parable

The philosopher concedes: AI-generated art is creation. It satisfies any definition of “new artifact produced by a process.” But he immediately draws a distinction that reframes the entire debate. Good creation and bad creation are separate judgments — and more importantly, the act of creating and the product of creation are different dimensions of value.

He offers a parable. A Russian Tsar had serfs who farmed all the land, but the Tsar still kept a small personal garden. The vegetables from the Tsar’s garden were no different from the serfs’ harvest. Yet the Tsar’s gardening was meaningful because he chose to do it — because it was an expression of will, of leisure, of something beyond necessity. In the same way, an AI-generated painting might be indistinguishable from a human one. But the human painter felt something while painting it.

The philosopher notes that France’s École des Beaux-Arts famously asks applicants not “How well did you make this?” but “Why did you make it?” Without a personal vision — without an existential motive that belongs to the creator alone — the work is craft, not art. AI cannot answer “why” in any way that isn’t a reformulation of its training data.

DeepMind’s AlphaGo defeated world champion Lee Sedol in 2016, and Lee retired in 2019, saying AI had become an opponent that could not be beaten. Yet audiences still watch human Go matches, not AI ones. Professional players study AI games as training tools, but no one buys tickets to watch two algorithms play. The value we assign to a game is inseparable from the human struggle behind it. The result is identical; the meaning is not.

The quality gap between AI and human output is collapsing toward zero. AI art wins competitions. AI music charts. AI text passes editorial review. “The output is converging.”

The joy, pain, and absorption of making something remain exclusively human. AI has no existential “why” behind every brushstroke, every word. “The experience diverges forever.”

The data scientist, however, warns against complacency. Tools change humans. The car reshaped the human body; the smartphone reshaped attention. As people consume more AI-generated content, their aesthetic preferences will gradually calibrate to AI’s patterns. A future generation, raised on AI art, may genuinely prefer it — not because AI improved, but because humans adapted.

End of Labor, or Evolution of Work?

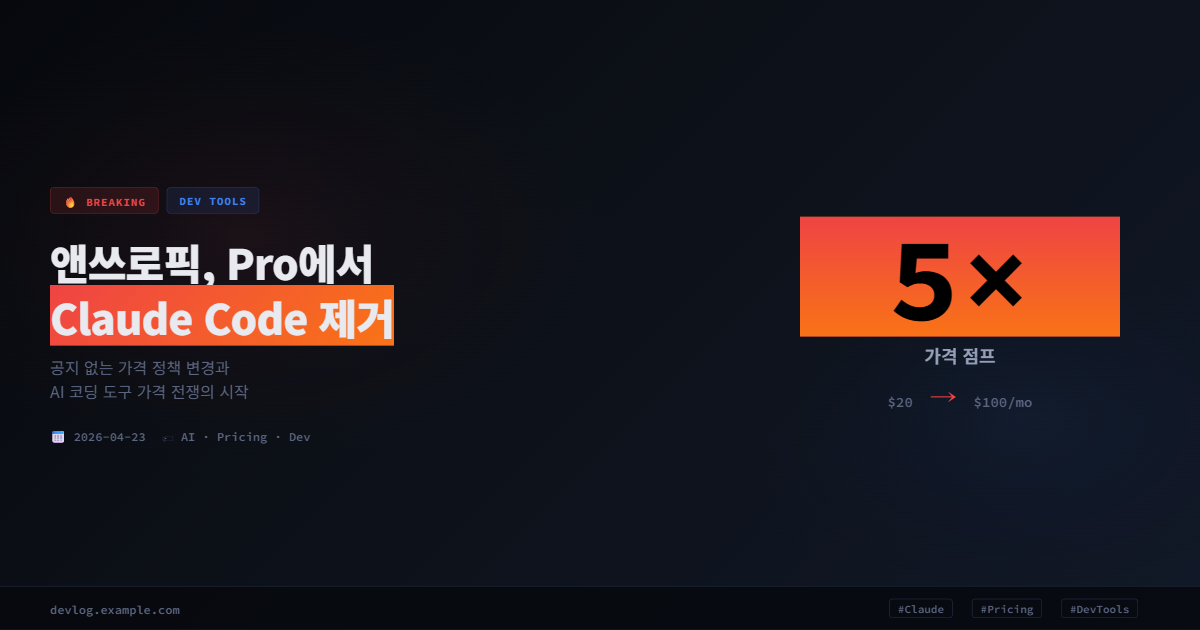

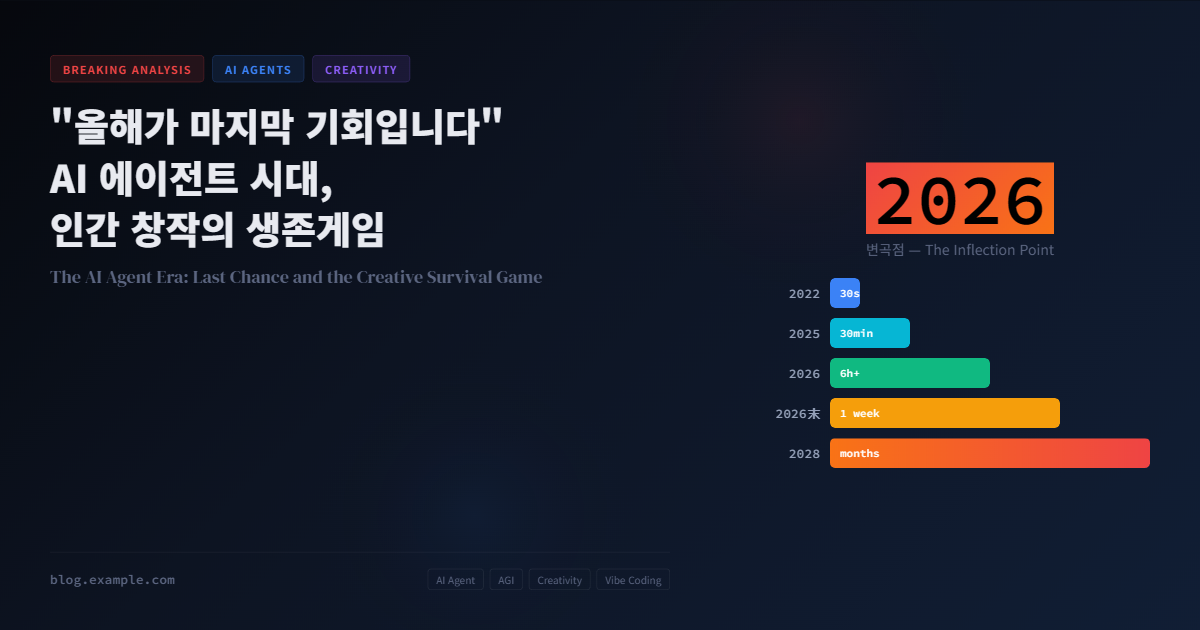

The data scientist identifies three ways the AI revolution differs from every previous technological disruption. First, speed — the internet took 10–15 years to reshape industries; AI is changing year to year. Second, breadth — previous revolutions hit specific sectors; AI hits everything from desk work to physical operations simultaneously. Third, target — this time, it’s educated white-collar workers who face the sharpest disruption.

Anthropic CEO Dario Amodei has warned that AI could eliminate up to half of all entry-level white-collar jobs within five years. Major firms including Amazon, Salesforce, and Klarna have already cut staff or plan to shrink their workforces, citing AI adoption, and Ford’s CEO has said AI will eventually replace half of all white-collar workers. Modeling-based estimates suggest AI may have already displaced 200,000–300,000 U.S. jobs in 2025 alone, far more than official filings acknowledge.

But the philosopher pushes back with a long view. He invokes ancient Greece: slaves did all labor — including intellectual labor. Epictetus was a slave-philosopher. Scribes and administrators were slaves. Free citizens didn’t work; they lived. If AI becomes our new slave class, handling both physical and cognitive toil, then the question isn’t “What jobs will remain?” but rather “What will we do with our freedom?”

He turns to Hannah Arendt’s framework. Arendt distinguished three categories of human activity: Labor (toil for survival), Work (creation for its own sake — art, craft), and Action (participation in civic and communal life). AI can replace Labor. It can assist with Work. But Action — the deliberate engagement with a community of fellow beings — is intrinsically human. The crisis, then, is not that AI takes our jobs, but that we have never learned to live without jobs.

[Figure: Arendt’s Labor, Work, and Action, labeled “AI can replace this,” “Intrinsically human,” and “The summit of human life.”]

Schopenhauer’s warning echoes here: “Poverty is the whip that strikes the lower class; boredom is the whip that strikes the upper class.” The ancient Greeks had all their labor done for them, and they were not happy. If AI liberates humanity from toil, we will face not paradise but a crisis of meaning. And that, the philosopher argues, is precisely where philosophy becomes essential: not as a luxury, but as survival equipment.

Right now, as you watch this video, are you enjoying yourself — or are you working? The sociologist Ulrich Beck would say: you’re working. You’re generating view counts and feeding big data. That is labor — you’re just not being paid for it.

Humanity Panics at the Top of Maslow’s Pyramid

Maslow’s hierarchy of needs is one of psychology’s most famous frameworks. But the philosopher raises an underappreciated fact: Maslow himself couldn’t clearly define self-actualization. He offered examples — Jesus, Buddha, Abraham Lincoln — but when pressed to articulate what the top of the pyramid actually is, he drew a blank. The reason, the philosopher argues, is simple: humanity has never collectively arrived at that level. We have always been too consumed with survival, status, and security to explore what lies beyond.

A common misconception is that Maslow insisted lower needs must be fully satisfied before higher ones can emerge. In fact, his point was about which need dominates at a given time. A refugee’s dominant need is safety. A modern knowledge worker’s dominant need might be esteem or belonging. As AI increasingly handles the lower tiers — automating labor, providing companionship through chatbots, even offering validation — humanity is being pushed, ready or not, toward the uncharted territory at the summit.

This has direct implications for education. The philosopher points out that modern schooling is overwhelmingly focused on instrumental subjects, the tools for economic participation, while the subjects that teach people to live (art, music, philosophy, physical culture) are marginalized. Aristotle tied philosophy to scholē, the Greek word for leisure and the root of “school”: it is what you study when survival is no longer the problem. The AI era may finally create the material conditions for Aristotle’s vision, but only if education evolves to meet the moment.

Palantir CEO Alex Karp has called the traditional university system “parasitic” and launched a four-month alternative school. Its core curriculum isn’t coding — it’s history and religion. Karp’s thesis: in a world where AI handles execution, what humans need is independent judgment, cultural literacy, and the capacity for original thought. Whether or not you agree with his framing, the signal is clear — even Silicon Valley is betting on the humanities.

Will We Grant AI Personhood?

The philosopher draws an analogy to The Marriage of Figaro, the Beaumarchais play that Mozart adapted into an opera. The former barber Figaro challenges the Count: “Apart from being born noble, what makes you better than me?” AI could soon pose the same challenge: “Apart from being born human, what makes you better than me?”

Following Hegel’s schema, the philosopher traces how freedom has expanded through history — from the monarch alone, to aristocrats, to citizens, to women, to children, to animals. The UK’s Law Commission has already published a discussion paper exploring whether AI systems should receive a form of legal personality to address liability gaps when autonomous AI causes harm. Meanwhile, an Ohio lawmaker introduced House Bill 469 in September 2025, explicitly banning AI personhood and AI-human marriages — a sign that legislators see the question as real enough to preempt.

The philosopher invokes Isaac Asimov’s The Bicentennial Man: a robot demands freedom, and when told freedom is only for humans, responds: “Freedom should be given to any being that desires it.” The robot eventually gains property rights and, in a final act of radical self-determination, chooses to die in order to be recognized as fully human.

The data scientist, however, draws a firm line. Today’s AI holds simulated outputs of human behavior — not an autonomous identity. The affection people feel for companion robots (or even Tamagotchis) is a human projection, not a reciprocal relationship. Until AI can genuinely want freedom — not merely generate text about wanting it — the ontological gap remains. But he concedes: the gap is getting harder to see.

What the Audience Said — Reading the Zeitgeist

The data scientist had spoken about intersubjectivity — the shared layer of meaning that emerges when individual minds overlap. The audience comments on this discussion serve as a live sample of that intersubjective field. Three dominant threads emerge: fractured human exceptionalism, transition anxiety, and a tentative openness to coexistence.

First, human exceptionalism is cracking: the assumption that being human is inherently superior is no longer taken for granted. Second, transition anxiety is acute: people intellectually accept that new jobs will emerge, but emotionally ask “What about me, right now?” Third, coexistence is gaining ground: fewer people want to draw a hard line between “us” and “them,” and more are asking how to share a world.

The Water Only Flows If You Keep Digging

The conversation arrives at a conclusion that is both modest and profound. It is not that certain humans are irreplaceable — the brilliant, the creative, the spiritual. It is that certain human acts are irreplaceable: wrestling with desire, searching for meaning, feeling the pain and pleasure of creation, choosing to participate in community rather than merely function within it.

AI can simulate all of these. It can generate text about longing, produce art that moves viewers, draft policy proposals for the common good. But there is a difference between simulating an experience and having one. The philosopher’s metaphor endures: meaning is a well — the deeper you dig, the more water you find. AI can analyze the water. It can describe the well. But the decision to pick up a shovel and dig — the act of choosing to engage with the mystery of one’s own existence — that is the irreducible human gesture.

“… Then again, a more romantic world might also be unfolding.”

That double-edged sentence may be the most honest summary of our present moment. The narrowing space and the romantic possibility — holding both without collapsing into either despair or delusion — that tension is itself a uniquely human act. And it may be the only act that matters.